This is just a stupid shader trick question, nothing very practical, so I'm just asking in case anybody enjoys a puzzle like this. I don't want anybody to spend any effort on it if it isn't enjoyable for them.

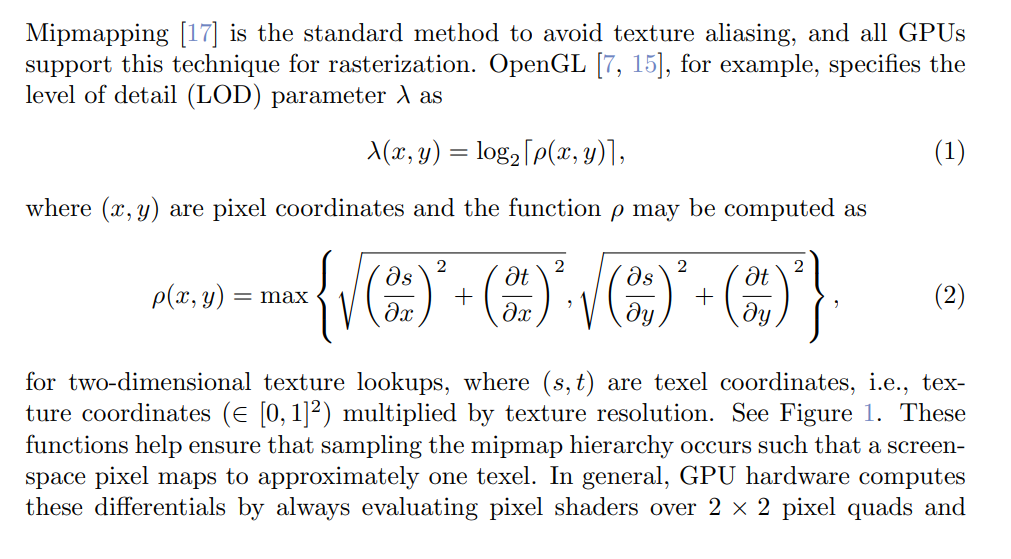

I used to do a bit of HLSL, but haven't for a while; I've been trying to improve my modelling in Blender. One thing that I can't do with Blender's materials is get the miplevel, and I kind of miss it from my HLSL days. (And I think, given a miplevel, I could get a dUV/dScreenPos, which would be useful for some things.)
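For reference, the miplevel/derivative relation I have in mind is the usual textbook approximation, sketched here in Python rather than HLSL so it's easy to run. It assumes plain isotropic trilinear filtering with no LOD bias; real hardware and Blender's renderers may pick levels differently, and the function names are made up for illustration.

```python
import math

def mip_level(duv_dx, duv_dy, tex_size):
    """Approximate isotropic mip level from screen-space UV derivatives.

    duv_dx, duv_dy: (du, dv) change in UV per one-pixel step in screen x / y.
    tex_size: (width, height) of mip 0, in texels.
    Assumption: textbook formula, not any specific renderer's behavior.
    """
    w, h = tex_size
    # Scale UV derivatives into texel units to get the pixel footprint lengths.
    len_x = math.hypot(duv_dx[0] * w, duv_dx[1] * h)
    len_y = math.hypot(duv_dy[0] * w, duv_dy[1] * h)
    rho = max(len_x, len_y)
    if rho <= 0.0:
        return 0.0
    # Magnification (rho < 1) clamps to mip 0.
    return max(0.0, math.log2(rho))

def footprint_from_mip(level):
    """Inverse direction: texels covered per screen pixel along the longer axis."""
    return 2.0 ** level
```

So if I could somehow recover the level, `footprint_from_mip` would give me the magnitude of dUV/dScreenPos (scaled by texture size), though not its direction.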

But after seeing somebody implement edge detection using Blender's bump nodes to implicitly get neighboring samples, it occurs to me that maybe this is possible with enough trickery.

Let's say you have a texture that you can make yourself (any image you want, whatever's useful for the task), a bump-mapped normal derived from that texture, and the pre-bump normal. The texture uses filtered lookups. Is it possible to recover the miplevel used for those lookups from the difference between the bump-mapped normal and the pre-bump normal?